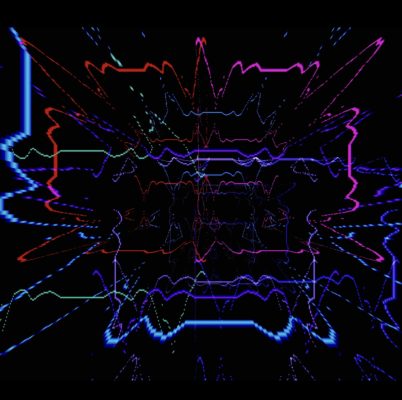

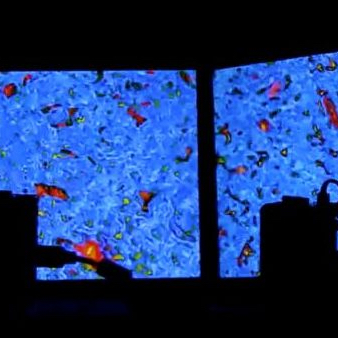

PiAv is a multi-sensory piece driven by stochastic processes to create an ever-changing audio-visual experience. The complete system is cross-pollinated: combinations of pitch clusters and samples influence the abstracted visual images, which are generated by code inspired by traditional analog video feedback techniques. In return, the color spectra and motion velocity within the visual images influence the sound processing, creating a meta-feedback system. The piece cycles through a series of scenes that vary from drone-like, contemplative clusters to riotous bursts of sound and energy.

PiAv uses ten networked Raspberry Pi computers. Eight custom-made speakers with embedded Raspberry Pi computers run networked Pure Data patches to provide a continually evolving soundscape. The visual images are created using two Raspberry Pi camera modules, video feedback, and custom Python scripts for video processing. A laptop running Max/MSP serves as a central messaging hub.
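
The visual-to-sound half of the feedback loop can be illustrated with a minimal Python sketch. This is not the piece's actual code: the function names, the frame representation (nested lists of RGB tuples), and the target sound parameters (`cutoff_hz`, `grain_density`) are all assumptions made for illustration. It reduces two consecutive frames to an average hue and a crude motion estimate, then scales those into control values that a sound patch could consume.

```python
import colorsys


def frame_features(prev_frame, frame):
    """Return (hue, motion) for two equally sized RGB frames.

    Frames are lists of rows, each row a list of (r, g, b) tuples in 0..255.
    hue    : hue of the frame's average color, in [0, 1)
    motion : mean absolute per-channel change between frames, in [0, 1]
    """
    r_sum = g_sum = b_sum = 0
    diff_sum = 0
    pixels = 0
    for prev_row, row in zip(prev_frame, frame):
        for (pr, pg, pb), (r, g, b) in zip(prev_row, row):
            r_sum += r
            g_sum += g
            b_sum += b
            diff_sum += abs(r - pr) + abs(g - pg) + abs(b - pb)
            pixels += 1
    hue, _, _ = colorsys.rgb_to_hsv(
        r_sum / pixels / 255, g_sum / pixels / 255, b_sum / pixels / 255
    )
    motion = diff_sum / (pixels * 3 * 255)
    return hue, motion


def to_sound_params(hue, motion):
    """Map visual features to hypothetical sound-processing controls."""
    return {
        "cutoff_hz": 200 + hue * (8000 - 200),  # assumed filter range
        "grain_density": 1 + motion * 49,       # assumed 1..50 grains/sec
    }


# Example: a 2x2 black frame followed by a 2x2 pure green frame.
black = [[(0, 0, 0)] * 2 for _ in range(2)]
green = [[(0, 255, 0)] * 2 for _ in range(2)]
hue, motion = frame_features(black, green)
params = to_sound_params(hue, motion)
```

In a setup like the one described, values such as these would be sent over the network to the messaging hub and on to the speakers; the transport and message format are left out here because the source does not specify them.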